At the start of the Covid-19 pandemic, I worked with a team to develop a video streaming service for live performance organizations. We built a platform from the ground up, working quickly to help organizations create virtual engagement as their doors closed and their audiences dissolved.

In the process, ideas were flying around like sparrows at sunset, jockeying for position on the rapidly evolving planning board. Some elements of the development were obvious: if you’re going to sell a ticket for something, you need a way to validate that ticket, and if you’re doing ticket validation, well, you can also track usage and metrics to understand attendance patterns. There were many similar cases of taking established norms from the live performance world and mirroring them in the virtual world, and plenty of examples from video platforms that had existed long before the pandemic. Watching content online was not exactly new in 2020, after all.

What was new was virtual content as a replacement for live experiences for an entire industry, however temporary. Plenty of arts organizations and artists felt strongly that watching a play or an orchestral performance on a TV or phone screen wasn’t a valid way to experience the art; there are shelves of books written about the purpose and value of live performance.

Some organizations lacked access to the technology and expertise required to produce virtual events at the level they and their audiences expected. Even organizations that were able to create high quality virtual content found themselves facing another problem: lack of human connection.

Anyone who’s spent time around theatre or comedy knows the difference an audience can make to the performance. Live art at its best is symbiotic, with the performer and the audience responding to one another. If that weren’t the case, we’d all prefer to watch filmed content, and artists would prefer to perform in their basements. No shade to film buffs or folks who dislike being in crowds: there are plenty of ways to have an authentic artistic experience or enjoy a performance. I think we can mostly agree, though, that it’s difficult to be a stand-up comedian if you’re on stage in a room by yourself and nobody laughs at your jokes.

Live comedy was the example that started my development team down a very slippery slope. Comedians need to see their audiences’ reactions in real time to adapt their pacing and content in response. Not only that, but people laugh more when the people around them are laughing: comedy is literally funnier when you experience it communally. This premise opened up a lot of experimentation, and if you attended a virtual comedy performance on any platform during the pandemic, I’m sorry: I think I can speak for the virtual events development world briefly here and say that this is a real sticky problem to solve and we all tried a whole bunch of things, many of which didn’t work. For example…

Maybe seeing the reactions isn’t so important — what if the performer could hear the audience laughing, and the audience could hear each other? Let’s enable two-way audio!

No. This is terrible. It’s like the laugh track from early sit-coms, only worse. Imagine a laugh track mixed with the audio from an all-company Zoom meeting where someone is off mute yelling at their dog while the CEO presents the budget. Side note: this is exactly why sit-coms had those laugh tracks, and yes, we considered adding those, too.

Ok, hearing extra audio is too distracting. Maybe the performer wants to see audience members laughing! We could ask people to turn on their webcams and show them to the performer and to each other!

This one actually works… if you’re doing a Zoom meeting with eight of your biggest fans. When you’re streaming content to hundreds or thousands of people, it’s not great — trust me, we tried. Two-way video in real time is tricky, and you really don’t want to see motionless faces when you crack a big joke, followed by smiles and laughs a few seconds later. That sounds like the setup for a dystopian film about an evil tech overlord trying to slowly destroy a comedian’s mind via gaslighting.

Once you solve the two-way real-time video thing, how do you display the result? Do you put a big screen in the studio with the comedian and fill it with a ton of little tiles? How big do the tiles need to be for them to be visible and useful to the performer? What if you don’t have a big screen (or a studio, for that matter)?

Perhaps you do a rotating scroll bar of eight or so tiles. How do you decide which audience members to include? Random selection is easiest, but we’re not all in a shared physical space with societal expectations and a bouncer at the door. Somebody in the virtual audience is definitely sitting around in their underwear or ignoring the screen and yelling at their dog. Do you want to have that person (or best-case scenario, empty chair) front-and-center in the performer’s view?

Are you showing videos of audience members to the full audience, too? We want connection, but do we want a jumbotron effect when people are watching from a variety of personal spaces? Is someone curating that audience video feed? Who, and what guidelines are they using?

Wow, this is more complicated than we thought. Ok, no video, no audio. I know: let’s have little emoji icons you can click to show reactions, like a smiley face or clapping hands! We can float those across a little screen for the performer: they’ll be real-time and there’s no way for someone to abuse that or make it weird, right? Oh, and we can have an optional chat feature, so audiences can talk to each other and compliment the artist!

Ok, well, yeah. Boring, but yes, fine. Social platforms have had these features forever, but sure, it seems to be more or less working, and people expect it.

Yeah, you’re right, that’s not innovative or exciting. Ok, we’ll get that working as a baseline, then we’ll come up with something REALLY COOL, you’ll see.

Pro tip: whenever somebody comes up with something REALLY COOL, perk up your ears and listen carefully. This is how exciting innovations happen, but it’s also a good time to practice your consequence scanning skills.

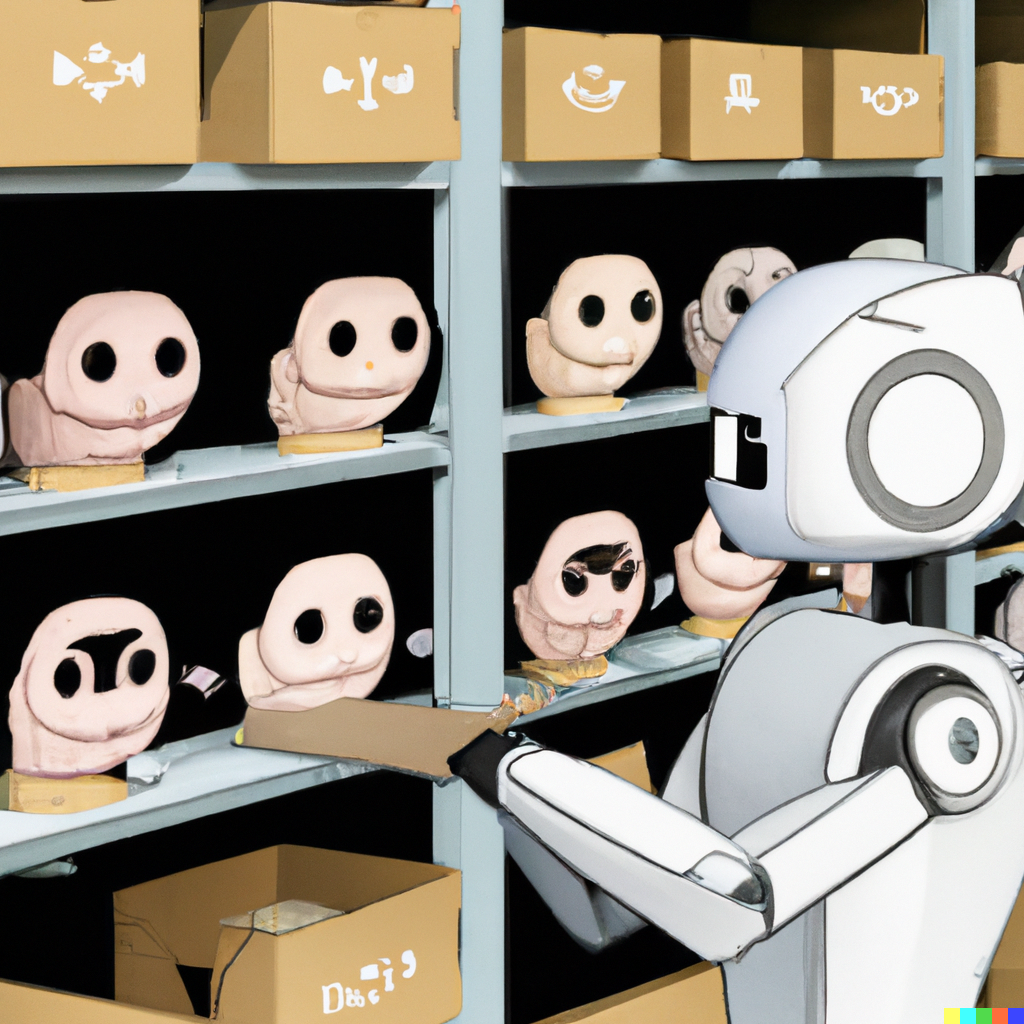

Ok, we’ve been experimenting with some ideas… we can integrate facial recognition technology! The tech is getting really good, so we’ll be able to tell when people are laughing, frowning, and so on. We can then decide how to show those reactions to the performer — maybe we show them some animated characters instead of the real humans, or maybe we use the data to decide who to show on the scrolling video tiles, or…

Hooooold on a second. Facial recognition tech? There are a lot of problems and privacy concerns in this ar…

No no it’s fine, we’ll make sure the viewer clicks a button to opt in or whatever, we can handle the privacy thing, it won’t be like we’re spying on them or anything, relax

Ok, this is still really concerning, I’m not comfortable wi…

Yeah we can make sure it’s super clear and above-board, don’t worry. Ok so anyway, facial recognition tech can also do SO MANY OTHER COOL THINGS! Like, it can recognize specific people, so the organization would know if a VIP is in the audience. It can also handle a lot of demographics, probably, so like we could use it to analyze audiences and see the gender, age, race distribution, and help orgs know what audiences they’re reaching virtually! We could even map that data back against the laughter responses to see which kinds of people react to which kinds of jok…

NOPE NOPE NOPE *deep breath* Ok, I see why you’re excited, and I know this seems really innovative, but let’s take a step back for a second and think about what this implies.

Are you going to trust an algorithm that was trained on an unclear dataset to identify “different kinds of people” based on categories that have a variety of built-in biases? Do we believe that a person’s identity can be read by a machine based on their physical appearance? Would audience members be comfortable having a computer collect data about them and their reactions and apply identifying labels to them (even if we imagine that the data could somehow be accurate, which we know it is not)? There are regulations that govern the kinds of personally identifiable information (PII) you can collect and store, and we’re not even talking about collecting data from humans directly; we’re talking about assigning PII to humans without their knowledge or approval. And even if you’re able to finagle consent, would those humans be comfortable with the decisions an event producer might make based on this data?

Let’s imagine an example. If a computer says that audience members of a certain age and ethnic background didn’t “respond as well” as audience members who are older and white, will I assume that the older and white audiences are also more affluent, therefore a more desirable audience, therefore more worth pleasing, and will I avoid booking that artist in future and instead book someone more appealing to this audience? Or will I seek out new artists that may help diversify our audiences?

And if I’m tracking how audiences “respond”, let’s remember that this is not only up to a computer with unclear bias interpreting facial data, but also up to me to correlate that questionable data against my own expectations of the “ideal response” to a performance. Will I question how the computer generated its results, or will I assume that as a computer it is impartial, smarter than me, and almost magical, and therefore whatever it says must be true? If I’m unlikely to question the dataset provided by the algorithm, will I also be less likely to question my own resulting conclusions and decisions?

Here’s another example. If a computer tells me that a specific person (perhaps a VIP) is watching my show, what will I do with that information? Will I send them a personalized follow-up email about the show? If so, will they be flattered or creeped out about it, and did they attend under their own name, or did they appear on a friend’s screen? Am I going to post on their social media about how great it was to see them at the show? What if they didn’t want to be seen? Perhaps the show’s content doesn’t match their public identity, and tagging them as an attendee would “out” them in a way that could be uncomfortable or even dangerous. And is it even the person the computer identified, or did the computer make a mistake?

These kinds of examples might seem extreme or paranoid, but as humans bringing new technologies into our world, we have a responsibility to consider the possible consequences of the systems we create. Using a risk-based approach can help us identify unplanned positive outcomes as well as unintended negative consequences, and is an essential element of responsible design.

My team decided that the risk outweighed the reward and we did not build facial recognition tools into our product. That doesn’t mean other companies would reach the same conclusion: perhaps a team with a different use case will find a way to involve this tech in a careful, consent-based, transparent and positive way. Unethical companies will also certainly build tools like facial recognition into their platforms without considering (or caring about) the risks and without gathering consent — we have plenty of examples of this sort of behavior.

Building ethics into the design process is crucial to empower ethical companies to reach the best outcomes, and this requires a robust ecosystem of specialists and tools to make it possible. In the US, the work happening at NIST to develop standards, guidelines, and frameworks is an important step, as is the leadership of large companies like Salesforce that are increasing visibility in this field. Balancing tools with regulation is also critical to protect against unethical actors: the EU’s digital strategy is a great example of a regulatory approach.

We live in exciting times for technological innovation; it’s up to all of us to decide how the next generation of systems will impact humans both now and into the future.